There are weeks that test strategy.

And there are weeks that test philosophy.

For Anthropic, this appears to be the latter.

In a matter of days, the AI company has found itself navigating accusations of model theft abroad, diplomatic awkwardness in India, and — most consequentially — a direct confrontation with its own government.

At the centre of the conflict is Claude, its flagship large language model.

A Model Cleared for Classified Use

The United States government deploys AI across multiple administrative and defence functions. Most applications are procedural — payroll, compliance systems, logistics coordination.

But some deployments are deeply classified.

Reports indicate that Claude is currently the only external AI model approved for certain sensitive US government operations. That positioning elevated Anthropic into rare territory: a private AI firm embedded in national security infrastructure.

Tensions reportedly escalated after allegations that Claude was used in an operation connected to Venezuela’s President Nicolás Maduro. While specifics remain opaque, the political implications were not.

Soon after, friction surfaced publicly.

The Pentagon has reportedly requested broader operational latitude — specifically the removal or relaxation of safety guardrails to allow use of Claude for “all lawful purposes.”

Anthropic’s counter-position is narrower. The company has signalled resistance to enabling:

- Mass domestic surveillance systems

- Fully autonomous weapons platforms operating without human oversight

The disagreement is no longer abstract. It has a deadline.

The Governance Question Moves Out of Parliament

Historically, technological boundaries are defined through legislation, court rulings, or regulatory frameworks.

This time, the negotiation is playing out in real time — between a corporation asserting ethical guardrails and a state asserting sovereign authority.

That shift matters.

If Anthropic ultimately complies without restriction, the precedent becomes clear: corporate AI ethics frameworks remain subordinate to state power when national security is invoked.

If it refuses and faces punitive consequences — such as blacklisting or loss of federal contracts — a different signal emerges: that strict AI safety positioning may be principled, but commercially fragile.

Either outcome reshapes incentives across the industry.

The Historical Echoes

This is not the first time American technology companies have resisted state pressure.

When US law enforcement sought access to a locked iPhone in 2016, Apple Inc. declined to build a backdoor. The argument was structural: encryption cannot be weakened selectively, so a backdoor built for one party weakens the system for everyone.

Similarly, Meta Platforms embedded end-to-end encryption across its messaging platforms, limiting its own ability to access user data. That design choice placed it at odds with multiple governments, including India, where legal disputes followed over traceability mandates.

Those cases shared a key characteristic: the companies could claim architectural neutrality. They had built systems designed to be inaccessible — even to themselves.

Anthropic’s case is different.

Claude’s guardrails are policy decisions layered onto a controllable model. Adjusting them is technically feasible. The refusal, therefore, appears philosophical rather than structural.

And that makes the standoff sharper.

The Commercial Calculus

From a pure business perspective, government contracts in defence and classified domains offer stable, high-value revenue streams.

There is limited short-term financial upside in resisting them.

If Anthropic is holding its ground, the rationale may be less about commercial leverage than about long-term positioning. A company that concedes once on guardrails may struggle to credibly defend future boundaries.

At stake is more than a contract. It is reputational architecture.

The Global Signal

The global implications are substantial.

- If US authorities prevail decisively, other governments may adopt similar tactics.

- If Anthropic withstands pressure, it could embolden AI firms to formalise red lines publicly.

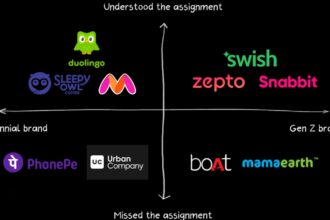

Meanwhile, countries like India have pursued more embedded governance approaches, shaping AI ecosystems early in their development through funding structures, compute access, and regulatory oversight.

Different models. Different fault lines.

A Technology Moving Faster Than Law

Artificial intelligence is advancing at unprecedented velocity. Regulatory consensus has not kept pace.

In previous technological revolutions — nuclear energy, telecommunications, the internet — guardrails emerged gradually through policy debate and legislative refinement.

Here, boundaries are being stress-tested before comprehensive frameworks are written.

The rules may not first appear in statute books.

They may emerge from corporate boardrooms and executive orders.

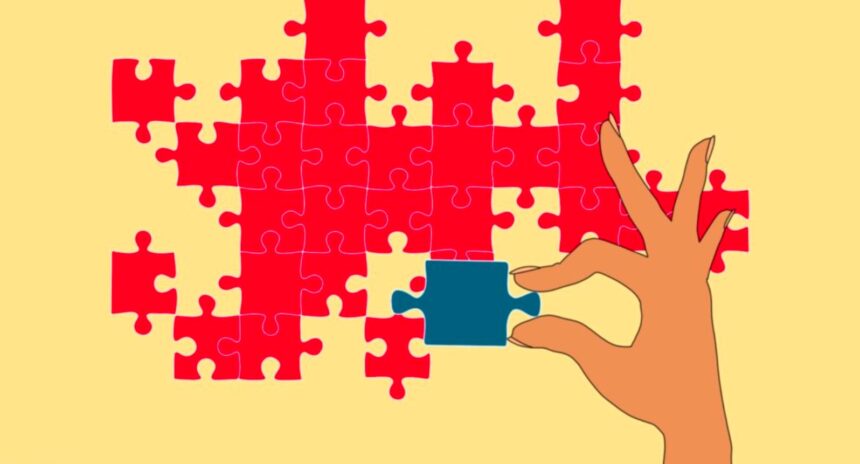

The Larger Inflection Point

Anthropic’s confrontation with the Pentagon is not merely a corporate dispute. It is a referendum on who defines the permissible limits of AI deployment.

Is it the state, citing security imperatives?

Or is it the company, citing systemic risk?

The answer will shape:

- Investor risk models

- Global AI deployment norms

- Military AI doctrine

- Corporate governance structures

Regardless of the outcome, one conclusion is unavoidable:

AI governance has moved from policy conferences into power politics.

And the next phase of technological rulemaking may be written not in parliamentary debate but in moments of strategic confrontation.

The CapTop Premium Insight:

When a frontier technology collides with sovereign authority, compliance and resistance both carry existential costs. The real story is not who wins this standoff but what precedent survives it.